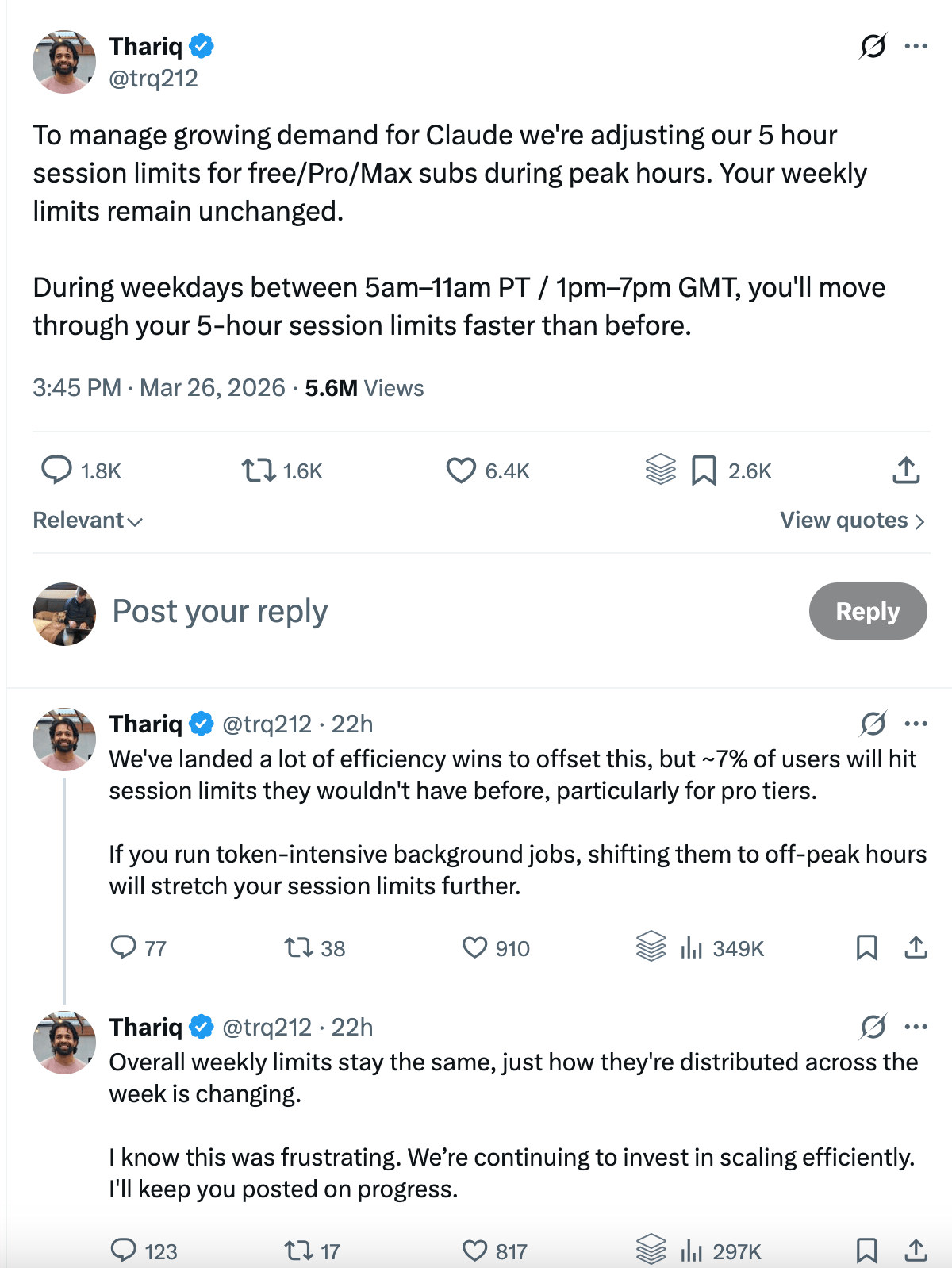

If you’re like me and use Claude Code as your daily driver, you’ve definitely noticed that your credits have suddenly stopped going as far as they used to. This poor soul had the unfortunate task of relaying that news to X:

I did some research and found that Anthropic has not disclosed any specific numbers on how much your 5-hour session limits will change, beyond an estimate that the change will impact 7% of users. Based on my own experience, I’m going to guess that more than “7%” of you are in that group…

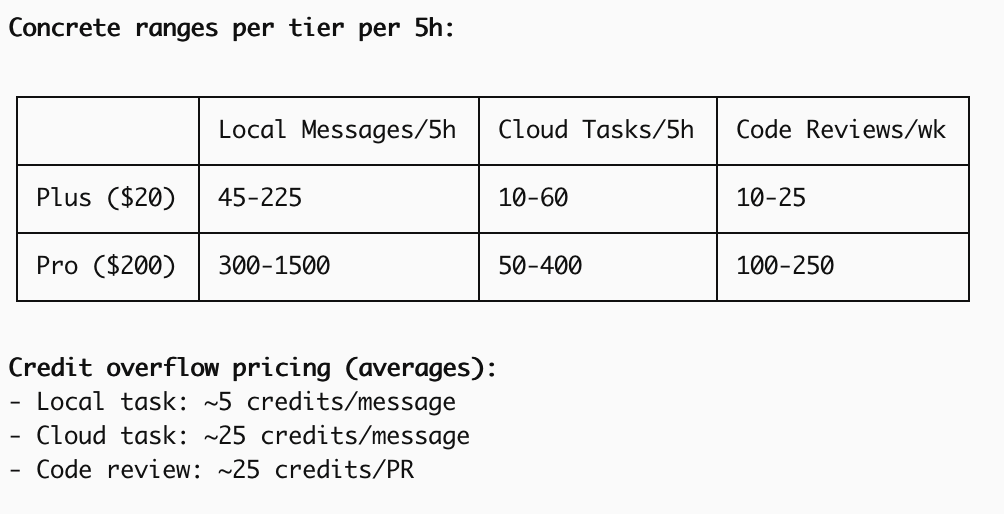

If you look further into what we actually know about our coding subscriptions, like how many tokens each plan actually gets, you’ll find that OpenAI is only slightly less opaque than Anthropic, providing broad ranges of the number of messages, tasks and code reviews you get per 5h period:

It seems obvious this week why the providers have been vague in their policies. They need to be able to adjust these limits as usage changes because they don’t have the infrastructure to meet the scaling demand. This goes back to my newsletter from last week going over NVIDIA’s $1T investment to meet GPU chip demand. There simply aren’t enough chips in place.

This comes at the same time as Bernie Sanders and AOC are calling to halt datacenter development. Maybe the change is even related. It raises the question of whether regulation is needed for consumer transparency.

A similar situation to what we are seeing with our Claude Code usage limits is mobile carriers’ “unlimited” data plans, which can actually be throttled as usage increases. The carriers were eventually forced by the FCC to disclose the throttling thresholds, even though consumers had already agreed they could be throttled when they signed up.

Anthropic’s usage agreement does not guarantee that services will always be available, and it says they can modify the service however they want. While the subscription policy has changed, their API still has the same price and rate limits, which tells you which of their services they see as the most flexible to change.

What will be interesting to see is if / when they change their policy back and if users leave the service for OpenAI. In the latter case, we may see OpenAI have to do the same thing. There are only so many GPUs in the world right now; there is a physical limit to what is possible.

I, for one, find myself putting slightly more thought into my prompts to Claude Code, questioning whether I am giving it a task in the most efficient way and trying to avoid endless loops that burn tokens. That feels very contrary to how most people have been using Claude, though, which to me has never felt so brittle. Let us hope those data centers keep getting built.